There is untapped potential in data that, if harnessed in a rights-respecting manner, could help solve society’s biggest problems and create new economic value.

Currently, though, much of the data needed to tackle these pressing challenges remains siloed within both public and private sources. At the same time, many jurisdictions, both in the real and digital world, lack comprehensive data protection and data security regulations to protect people’s rights and create sustainable mechanisms for data usage.

Further, institutions tend to struggle with building actionable models, leading to systems that focus more on collecting massive datasets than on unlocking the value from data that is spread throughout the institution. And, finally, there is the vexed issue of data accuracy and the need to minimize inherent biases contained in most datasets.

“Now is the time to develop responsible data practices that minimize negative impacts while ensuring the next generation of products and solutions are built in a manner that ensures the broadest benefits and minimizes harms and biases. If we do not begin to address the issues of data quality and accuracy, and understand that data must be fit for purpose, we run the risk of amplification of misinformation for generations to come,” says JoAnn Stonier, Chief Data Officer, Mastercard USA.

Transparent data architectures, inclusive systems design and a human-centred approach to how data is stored, integrated, processed and used can help solve these issues. Doing so means going beyond core principles into technical execution, striking an appropriate balance of creativity, innovation, responsible use and functionality.

“The relationship between people and their individual data, as well as collective decisions around the use of data, remain a mystery to many citizens and governments alike. Such lack of agency disempowers. As a result, companies handling data are in an advantaged position to decide for others, in the interest of profits, not of the public, what to do with data. How to make sure knowledge and understanding over the processing of data are used to achieve good governance of data, on a solid democratic values foundation, is what keeps me up at night. Solutions must include greater transparency and accountability, as well as specific requirements and oversight for sectors and applications,” says Marietje Schaake, Director, International Policy, Cyber Policy Center, Stanford University.

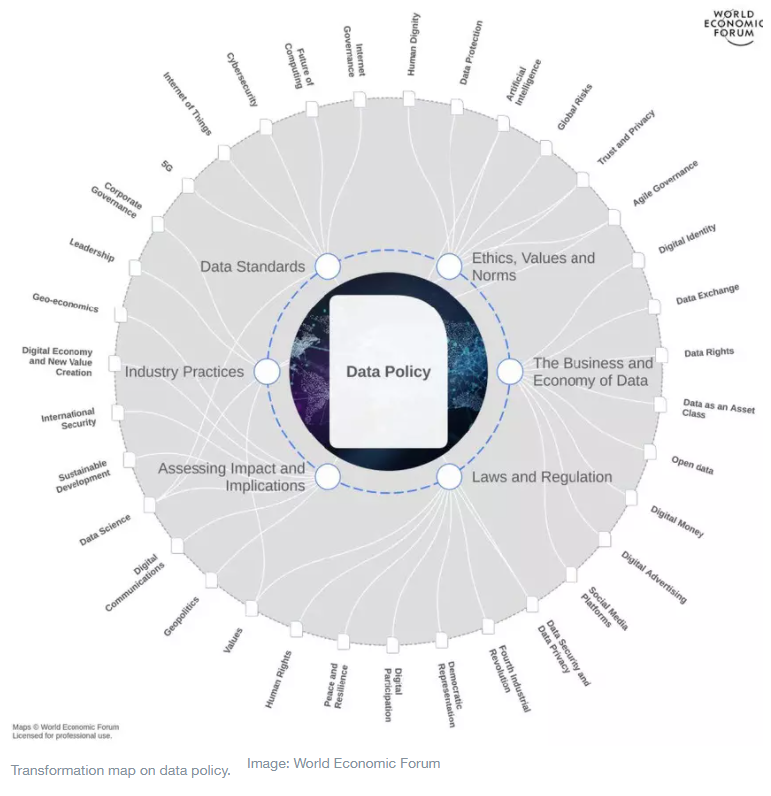

An immediate way forward in making sense of the complex and interlinked forces that are transforming the data ecosystem is to understand the key issues in data policy that will influence how all sectors of society will interact with data in the fast-evolving future of technology.

To coincide with our inaugural Global Technology Governance Summit, on 6-7 April 2021, we asked six experts: What is the key issue in data policy that is top of mind for you right now?

Bret Greenstein, Senior Vice-President, Global Markets; Head, Artificial Intelligence and Analytics, Cognizant

Countries like the US have a real opportunity to become friendly zones for companies that contribute data and insights to the broader market. Countries that do this will become havens for innovation and competitive advantage, while countries that further restrict data use and do not encourage sharing will become data deserts, falling further behind. The value of data comes from sharing and combining different perspectives to bring new insights. The biggest challenge we have is that sharing data is terrifying for business leaders for fear of losing control and competitive advantage. If the pandemic taught us anything, it is that there is power in data sharing. Bringing our data together generates insights that will create enormous value for business and society. The countries that incentivize data sharing – through tax breaks, programmatic investments, and modernized regulations – will gain significant economic advantage in the next five years.

This approach is already receiving a positive response in the US Congress, where lawmakers are proposing a number of bills aimed at bolstering US technology, such as the CHIPS Act, which would offer incentives to bring chip manufacturing back home, and the Endless Frontier Act, which would invest more broadly in technological advancement.

Lesly Goh, Fellow at Cambridge University, Former CTO at World Bank Group

In the digital economy, data has become a critical resource that can be monetized and yield significant benefits to individuals and companies. However, there are risks that the data can be stolen, misused, or manipulated without the consent of the individual who originated the information. Consumer protection guidelines and standards are well established for physical products and commercial services globally, but there are few or no established global norms and standards on how specific data harms can be reduced to protect people. There are a few challenges that make it difficult to set data protection standards, namely:

- The damage inflicted on the victim of data misuse is not immediately obvious to the victim, and thus hard to quantify in the near term.
- Since data can easily cross boundaries in both the real and digital worlds, it is difficult to identify which jurisdiction would apply in cases where there are cross-border data privacy violations.

In the run-up to and aftermath of the events of January 6, 2021 at the United States Capitol, the ubiquity of deep fakes, together with varying forms of disinformation and misinformation across social media platforms, has put a spotlight on the need for a greater focus on digital humanity in data policy. What redress is available to the victim? And what should the role of governments and the private sector be in calling for greater accountability, transparency, and responsibility across the data ecosystem?

Brett Solomon, Co-Founder and Executive Director, Access Now

Data is about us. Yet far too often we are removed from policies that decide what governments and companies will do with our information. Innovation should serve people and be built with us. We should challenge any assumptions that empowering people harms business and innovation. In a user-led world, the contrary is true.

The “harvest it all, share it all, use it all” approach to data is empowering the tech giants, but is proving to be increasingly harmful to society writ large. We must develop new, more sustainable, rights-based ways to innovate with data. This approach should embed security and privacy by design, give agency to people, and empower them to control their information.

It’s time to be bold and put commitments for human rights in practice. It’s time to build new models that advance data rights for users and sustainability for business.

Sean Audain, City Innovation Lead, Wellington City Council, New Zealand

Our physical and digital worlds are merging as the objects that make up our homes, workplaces and public spaces become more interactive and connected. This merging of bricks and bytes means that activities such as parking a car, paying for the bus, or turning on a light have an invisible, often unthought-of digital footprint. This rise of the unconscious internet has many benefits: it is key to more sustainable energy use in cities and comprehensive management of carbon emissions through supply chains, but it also opens up questions of privacy, transparency, duty of care and security for the data created by people and communities in these environments. The question of how far the unconscious internet extends, what relationships exist within it and what rights attach to it is key to the continued growth of combined digital/physical environments in places and spaces worldwide.

Tricia Wang, Tech Ethnographer, Sudden Compass

We need a globally agreed-upon, interoperable definition of personal data. To do this, we don’t need more definitions of personal data, we need to change the way that we define it. We need to move from definitions that predefine what qualifies as personal data to definitions that account for the continual expansion of things that qualify as personal data. This shift would account for what data advocate Vaughn Tan calls a multilevel, multi-dimensional approach to definition making.

In a digitally mediated world, people’s data can be acted upon without their consent. And because that action can impact on how much agency they have over their lives and livelihoods, personal data is the core of our digital humanity. But current definitions of personal data are unable to keep up with the evolution of personal data. For example, a year ago, COVID-19 tests as personal data did not exist. Without a globally agreed-upon interoperable definition of personal data, no amount of regulation, advocacy, policymaking, data rights or open data projects can fix this structural issue. Companies and institutions that oversee large amounts of personal data – such as ad tech, blockchain smart-contract oracles, biometric health services and social media – are figuring it out on their own by creating consortiums, engaging in self-regulation or just doing the minimum to be legally compliant. At the other end, policymakers are implementing measures that often create more confusion and bureaucracy for companies and institutions. On the civil society side, data advocates are calling for responsible tech. All these efforts will better serve our digital humanity if we are operating in sync with our understanding of personal data.

Monique J. Morrow, Senior Distinguished Architect, Emerging Technologies, Syniverse

Hello new world! Perhaps we will need to have a privacy button developed as an option to allow for some level of randomness and serendipity. Let’s take this notion of “decentralized” and its execution a bit further. Can “things” and “people” operate autonomously where “authority” is defined within these constructs? The decentralized paradigm is a work in progress shaping a “new Internet” that indeed embeds a notion of shared ethics and where data is under the purview of the individual or, even more provocatively, the evolving organism or thing. The brain as an interface to the internet has been under development for the past several years to foster the “Brainternet”. However, with the development of portable MRIs, could we not think about the potential of “telepathic exchanges”, or brain-to-brain messaging, and if so, why would we need an internet at all? In essence we become the internet. We are the Human Internet!

Further, we can now add our own “serendipity on-off buttons” and choose to share what we wish to share and with whom we wish to share. However, will I then be able to fend off a new kind of denial-of-service attack on my brain? What is the potential for abuse in this “brave new world”, should our brains become “co-opted” by a few miscreant actors? Too far off in the future? That is important for you, as our reader, to decide.

This article was originally published by the World Economic Forum under a Creative Commons licence.

The views and opinions expressed in this article are those of the author and do not necessarily reflect the views of Vision of Humanity.